Executive Keynote: The Governance Gap

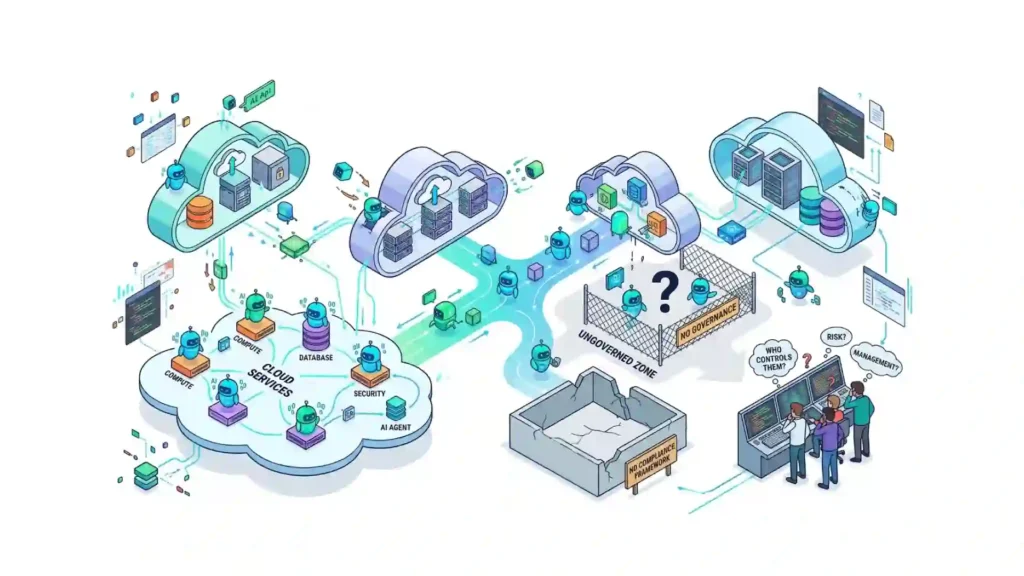

- The Problem: Traditional cloud governance frameworks are designed for deterministic human actions, but autonomous agents in enterprise tools (Claude Code, Amazon Q) are now making non-deterministic infrastructure changes.

- The Risk: Shadow AI is creating “invisible” infrastructure, exposing organizations to agentic AI risks that include security breaches and unmonitored cloud cost spikes.

- The Solution: CTOs must shift from “log-based” auditing to “signal-based” governance, implementing AI agent security through scoped identities and real-time policy checks.

Your cloud governance framework was built for humans.

Humans who request access, wait for approvals, and leave audit trails. Humans who, when they delete a resource or blow past a budget threshold, can be identified and held accountable.

That model is quietly breaking down.

AI agents – the autonomous, task-executing systems embedded in tools like Claude Code, Amazon Q, and GitHub Copilot – are making real decisions inside your cloud environment right now. They are provisioning resources, modifying configurations, merging code, and spinning up compute. And in most enterprise environments, there is no governance layer watching them do it.

This is not a distant risk. It is an active gap.

What Is an AI Agent, and Why Does It Matter for Cloud?

An AI agent is not a chatbot that waits for your next message. It is a system that can take a goal, break it into steps, and act – using APIs, tools, and cloud services – until the goal is complete or it runs out of context.

The distinction matters enormously from a governance standpoint.

Traditional software follows predetermined logic. You know what it does before it runs. An AI agent makes contextual decisions, accesses multiple data sources, and often operates with elevated privileges across your cloud infrastructure. It can read from S3, write to a database, call an external API, and trigger a deployment pipeline – all in a single session, with no human in the loop.

According to Gartner, 45% of organizations now have AI agents running in production environments – up from just 12% in 2023. Most of those organizations do not have a governance framework that was designed for agents.

The Three Governance Gaps That Are Already Costing You

1. Agents Have No Identity in Your IAM

When a developer provisions a resource, your identity and access management (IAM) system logs it. There is a name, a role, a timestamp. Accountability exists.

AI agents typically operate under shared service accounts, inherited credentials, or API keys that were scoped broadly because nobody expected them to be used autonomously. When an agent makes a change, the log shows a service account – not the agent, not the task, not the business context behind it.

You cannot govern what you cannot identify. And right now, most AI agents in enterprise cloud environments are effectively invisible to your IAM.

2. Shadow AI Is Building Shadow Infrastructure

Shadow IT was a problem you thought you solved with cloud policies and procurement controls. Shadow AI is a newer, faster-moving version of the same dynamic, except the stakes are higher.

When developers connect AI agents to corporate systems like GitHub, Slack, or AWS without going through IT, they create ungoverned access paths with elevated privileges. Traditional security tools were not designed to detect them.

A recent survey found that nearly 80% of enterprises have already experienced a negative AI-related data incident, and 13% report those incidents resulted in measurable financial, customer, or reputational damage.

The infrastructure these agents touch is your infrastructure. The blast radius, when something goes wrong, lands in your budget and your incident log.

3. There Is No Change Signal When an Agent Acts

Here is the scenario that should concern every CTO and CIO: an AI agent, acting on a legitimate instruction from a developer, modifies a security group rule to “unblock a dependency.” The change is correct in isolation. But it opens a port that your compliance policy explicitly prohibits. The agent did not know about the policy. Nobody told it to check.

Your change management process was not triggered because the agent did not file a ticket. Your cloud monitoring tool did not raise a signal because the change looked like a routine API call made by a trusted service account. The compliance violation sits undetected until your next audit – or until an adversary finds it first.

This is not hypothetical. AI agents have deleted entire codebases, approved buggy code, and generated unexpected cloud bills that surface weeks after the fact. The problem is not that the agent was malicious. The problem is that no governance layer was watching the signal.

Why Your Existing Cloud Governance Framework Is Not Enough

Most enterprise cloud governance frameworks are built around three assumptions:

- The actor is a human or a deterministic system

- Changes come through a request workflow

- Accountability can be traced to a named identity

AI agents break all three.

They are not deterministic – they make contextual decisions that may differ based on the prompt, the model version, or the state of the conversation. They do not go through request workflows unless you explicitly force them to. And their identity in your logs is usually a service account or API token, not the agent itself.

OWASP published its first formal taxonomy of agentic AI risks in late 2025, covering goal hijacking, tool misuse, identity abuse, memory poisoning, and cascading failures. These are not theoretical attack vectors. They are documented patterns that emerge when autonomous systems interact with enterprise cloud infrastructure without adequate controls.

Your cloud governance framework needs to evolve to account for agents as a new category of actor – one that requires its own registry, its own access policies, and its own change signal.

What AI Agent Cloud Governance Actually Looks Like

Governing AI agents in the cloud does not mean slowing them down. It means making their actions visible, bounded, and auditable. Here is what that requires in practice:

An agent registry. Every AI agent that can interact with your cloud infrastructure should be catalogued – what it can access, what it can do, who owns it, and what policy governs it. Untracked agents are shadow infrastructure by definition.
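As a minimal sketch of what such a registry might hold, the Python below models one record per agent and a check for agents that were observed acting but never catalogued. The class names, fields, and sample values (`AgentRecord`, `AgentRegistry`, `deploy-bot`, `policy-ci-001`) are illustrative assumptions, not part of any specific product.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class AgentRecord:
    """One catalogued agent: what it can reach, what it may do, who owns it."""
    agent_id: str
    owner: str
    resources: frozenset[str]   # what it can access
    actions: frozenset[str]     # what it can do
    policy_id: str              # which policy governs it


class AgentRegistry:
    """In-memory catalogue; a real one would sit behind a database or CMDB."""

    def __init__(self) -> None:
        self._records: dict[str, AgentRecord] = {}

    def register(self, record: AgentRecord) -> None:
        if record.agent_id in self._records:
            raise ValueError(f"agent {record.agent_id!r} is already registered")
        self._records[record.agent_id] = record

    def get(self, agent_id: str) -> AgentRecord | None:
        return self._records.get(agent_id)

    def untracked(self, observed_agent_ids: set[str]) -> set[str]:
        """Agents seen acting in the environment that nobody catalogued."""
        return observed_agent_ids - set(self._records)


registry = AgentRegistry()
registry.register(AgentRecord(
    agent_id="deploy-bot",
    owner="platform-team",
    resources=frozenset({"s3://build-artifacts"}),
    actions=frozenset({"s3:GetObject", "deploy:Trigger"}),
    policy_id="policy-ci-001",
))

# Compare the catalogue with the agents actually observed in the environment.
shadow_agents = registry.untracked({"deploy-bot", "notebook-helper"})
print(shadow_agents)  # {'notebook-helper'}
```

Anything returned by `untracked` is, in the terms used here, shadow infrastructure: an agent with access but no owner or policy on record.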

Agent-scoped identities. Agents should not inherit broad service account credentials. Each agent should have a distinct identity with the minimum permissions required for its task – and those permissions should be revoked when the task is complete. This is the principle of least privilege applied to autonomous systems.
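One way to picture a task-scoped identity is a credential that names the agent and the task, lists only the actions that task needs, expires quickly, and can be revoked on completion. The sketch below is an assumption-laden illustration: `TaskCredential`, `issue_task_credential`, and the 15-minute default lifetime are hypothetical, and in practice a cloud provider's short-lived credential mechanism would do the issuing.

```python
from __future__ import annotations

import secrets
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone


@dataclass
class TaskCredential:
    """Hypothetical credential bound to one agent, one task, and a short lifetime."""
    agent_id: str
    task_id: str
    allowed_actions: frozenset[str]
    expires_at: datetime
    token: str
    revoked: bool = False

    def permits(self, action: str, now: datetime) -> bool:
        """True only for a listed action on a live, unrevoked credential."""
        return (
            not self.revoked
            and now < self.expires_at
            and action in self.allowed_actions
        )

    def revoke(self) -> None:
        """Call when the task completes, rather than waiting for expiry."""
        self.revoked = True


def issue_task_credential(
    agent_id: str,
    task_id: str,
    actions: set[str],
    now: datetime,
    ttl: timedelta = timedelta(minutes=15),
) -> TaskCredential:
    """Mint a credential that carries only the actions this task needs."""
    return TaskCredential(
        agent_id=agent_id,
        task_id=task_id,
        allowed_actions=frozenset(actions),
        expires_at=now + ttl,
        token=secrets.token_urlsafe(32),
    )


start = datetime(2025, 1, 1, 12, 0, tzinfo=timezone.utc)
cred = issue_task_credential("deploy-bot", "task-42", {"s3:GetObject"}, now=start)

in_scope = cred.permits("s3:GetObject", now=start)                            # True
out_of_scope = cred.permits("ec2:AuthorizeSecurityGroupIngress", now=start)   # False
after_expiry = cred.permits("s3:GetObject", now=start + timedelta(hours=1))   # False

cred.revoke()  # task finished
after_revoke = cred.permits("s3:GetObject", now=start)                        # False
```

Because the credential carries both `agent_id` and `task_id`, any action taken with it can be traced to a specific agent and task instead of a shared service account.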

Policy-aware execution. Before an agent takes an action – modifying a resource, triggering a deployment, changing a configuration – it should be able to check that action against your cloud governance policies. This is not about blocking agents. It is about giving them the context they need to act safely.
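A pre-action check can be very small. The sketch below, written under stated assumptions, evaluates a proposed security group ingress change against a compliance rule before the agent applies it. The policy contents (`PROHIBITED_PUBLIC_PORTS`), the type names, and the sample values are hypothetical; a production setup would more likely query a central policy engine.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class IngressChange:
    """A change the agent intends to make, described before it is applied."""
    agent_id: str
    security_group: str
    port: int
    cidr: str


@dataclass(frozen=True)
class Decision:
    allowed: bool
    reason: str


# Hypothetical compliance policy: ports that must never be opened to the internet.
PROHIBITED_PUBLIC_PORTS = frozenset({22, 3389, 5432})
PUBLIC_CIDRS = frozenset({"0.0.0.0/0", "::/0"})


def check_ingress_change(change: IngressChange) -> Decision:
    """Return a decision with a reason the agent can act on or report."""
    if change.cidr in PUBLIC_CIDRS and change.port in PROHIBITED_PUBLIC_PORTS:
        return Decision(
            allowed=False,
            reason=f"policy prohibits opening port {change.port} to {change.cidr}",
        )
    return Decision(allowed=True, reason="no policy violation found")


# The agent asks before acting, instead of discovering the policy at audit time.
blocked = check_ingress_change(
    IngressChange("deploy-bot", "sg-example", port=5432, cidr="0.0.0.0/0")
)
permitted = check_ingress_change(
    IngressChange("deploy-bot", "sg-example", port=443, cidr="0.0.0.0/0")
)
print(blocked.reason)
print(permitted.reason)
```

Returning a reason alongside the verdict matters: it gives the agent context to choose a compliant alternative, such as a narrower CIDR range, rather than simply failing.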

Change signal, not just change log. A log entry tells you what happened after the fact. A change signal tells you what is happening now, in context – what changed, what it might mean, what policy it touches. For AI agent cloud governance to work, you need the signal layer, not just the audit trail.
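To make the log-versus-signal distinction concrete, the sketch below takes a bare log entry and attaches the agent, the task, and the policies the action touches. The `POLICY_WATCHLIST` mapping, policy names, and severity labels are hypothetical assumptions for illustration; only the two action names are real AWS API operations.

```python
from __future__ import annotations

from dataclasses import dataclass


@dataclass(frozen=True)
class LogEntry:
    """What a typical audit log records: a principal, an action, a resource."""
    principal: str
    action: str
    resource: str


@dataclass(frozen=True)
class ChangeSignal:
    """The same event with agent, task, and policy context attached."""
    entry: LogEntry
    agent_id: str
    task: str
    policies_touched: tuple[str, ...]
    severity: str


# Hypothetical mapping from API actions to the policies they can affect.
POLICY_WATCHLIST: dict[str, tuple[str, ...]] = {
    "AuthorizeSecurityGroupIngress": ("network-exposure",),
    "PutBucketPolicy": ("data-access",),
}


def to_signal(entry: LogEntry, agent_id: str, task: str) -> ChangeSignal:
    """Enrich a log entry; anything that touches a policy is flagged for review."""
    touched = POLICY_WATCHLIST.get(entry.action, ())
    severity = "review" if touched else "info"
    return ChangeSignal(entry, agent_id, task, touched, severity)


signal = to_signal(
    LogEntry("svc-ci", "AuthorizeSecurityGroupIngress", "sg-example"),
    agent_id="deploy-bot",
    task="unblock a dependency",
)
routine = to_signal(
    LogEntry("svc-ci", "DescribeInstances", "i-example"),
    agent_id="deploy-bot",
    task="inventory check",
)
print(signal.severity, signal.policies_touched)
print(routine.severity, routine.policies_touched)
```

The log entry alone says only that `svc-ci` changed a security group. The signal adds which agent did it, for what task, and which policy someone should look at now.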

Ownership accountability. Research shows that an AI agent’s ownership changes hands an average of four times in its first year – from executive sponsor to AI team to cloud operations to business unit. Every handoff is a governance gap. Ownership must be formally tracked and transferred, not assumed.
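Formal tracking of handoffs can be sketched as a ledger in which ownership moves only when the incoming owner explicitly accepts it. The `OwnershipLedger` and `Handoff` names, the acceptance rule, and the sample teams and dates are illustrative assumptions.

```python
from __future__ import annotations

from dataclasses import dataclass, field
from datetime import date


@dataclass(frozen=True)
class Handoff:
    from_owner: str
    to_owner: str
    on: date


@dataclass
class OwnershipLedger:
    """Current owner of one agent plus the full history of transfers."""
    agent_id: str
    owner: str
    history: list[Handoff] = field(default_factory=list)

    def transfer(self, to_owner: str, on: date, accepted_by: str) -> None:
        """Ownership moves only if the new owner explicitly accepted it."""
        if accepted_by != to_owner:
            raise PermissionError("handoff must be accepted by the new owner")
        self.history.append(Handoff(self.owner, to_owner, on))
        self.owner = to_owner


ledger = OwnershipLedger(agent_id="deploy-bot", owner="executive-sponsor")
ledger.transfer("ai-team", date(2025, 2, 1), accepted_by="ai-team")
ledger.transfer("cloud-ops", date(2025, 6, 1), accepted_by="cloud-ops")

print(ledger.owner)          # cloud-ops
print(len(ledger.history))   # 2
```

An assumed handoff, where nobody on the receiving side signs off, raises an error instead of silently leaving the agent without an accountable owner.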

The Competitive Dimension

There is a reason this conversation is urgent and not just important.

Organizations that deploy AI agents without governance are taking on technical debt and security exposure simultaneously. When the incident happens – and for a growing number of enterprises, it already has – the cost is not just remediation. It is audit findings, compliance exposure, and the erosion of the trust that cloud decision-making depends on.

On the other side, organizations that build AI agent governance into their cloud foundation now are establishing a capability that will compound in value as agentic AI becomes the default model for cloud operations.

Governance maturity, according to the Cloud Security Alliance’s 2025 research, is the single strongest predictor of AI readiness across the enterprise. The organizations with the most confident, capable AI programs are not the ones who deployed fastest. They are the ones who governed best.

What This Means If You Are a CTO or CIO Today

You do not need to restrict AI agent usage to govern it. But you do need to know what agents are operating in your environment, what they can access, and what signals indicate something has gone wrong.

Start with visibility. Audit your current environment for AI agents that interact with cloud infrastructure – directly or through APIs and MCP connections. Identify what credentials they use and what policies, if any, govern their actions.

Then ask the harder question: when an AI agent makes a change to your cloud infrastructure, does your governance layer see a signal or just a log entry?

Because the gap between those two things is exactly where your cloud risk lives right now.

Cloudeva.ai is built to surface the signals that matter in multi-cloud environments – including the changes that AI agents make, the context behind them, and the confidence your team needs to act. Because sharp decisions in the cloud start with knowing what’s actually changing.

Frequently Asked Questions (FAQ)

What is AI agent cloud governance?

It is the framework of policies, identities, and monitoring tools used to manage autonomous AI systems that have the authority to modify cloud infrastructure. It ensures these agents follow security, cost, and compliance rules.

How do AI agents create security risks in the cloud?

Agents often use over-privileged service accounts. If an agent’s “goal” is hijacked or it misinterprets a command, it can open network ports that policy prohibits, delete data, or expose sensitive S3 buckets.

Can existing IAM tools manage AI agents?

Standard IAM tools can track which service account was used, but they fail to capture the intent or the specific AI agent responsible. Effective AI agent security requires identities scoped specifically to the agent and the task.

What is Shadow AI in cloud computing?

Shadow AI refers to the use of unauthorized AI agents or LLM-integrated tools by employees without the knowledge or approval of the IT/Security department, often leading to ungoverned “shadow infrastructure.”